News

You can read this as an RSS feed.

Security Patches for "Copy Fail" and "Copy Fail 2 Dirtyfrag" Vulnerabilities

In the past week, two significant Linux kernel vulnerabilities, collectively known as Copy Fail and Copy Fail 2 Dirtyfrag, have been identified. Both flaws concern how the kernel handles memory and cache, posing a risk especially for multi-user systems and containerized environments.

What is it about?

This pair of vulnerabilities exploits logic errors in the Linux kernel that have been appearing in systems incrementally since 2017.

- Common Risk: Both flaws allow a local attacker to escalate privileges to the root level, gaining full control over the system.

- Differing Exploits: The two differ mainly in their published exploits. The first exploit demonstrates an attack that modifies the su binary, while the second works by manipulating the /etc/passwd system file.

The impact is critical for:

- HPC Nodes: Where multiple users run tasks on a single machine.

- Containers & Cloud: Since the page cache is shared across the entire system, an attacker in one container can affect the entire host machine.

Current Status in MetaCentrum

Our administrators have taken immediate action to address the situation:

- Managed Machines (HPC, OpenStack Cloud, Kubernetes): All servers directly managed by MetaCentrum (front-end nodes, computing nodes, storage servers) have already been updated and rebooted. These systems are secure, and no action is required on your part.

- User-Managed Servers (OpenStack and VMware): If you operate your own instances in the cloud (OpenStack) or virtual servers in VMware where you have administrative (root) access, you must patch these machines yourself.

URGENT: MetaCentrum administrators do not have access to your private cloud instances. Please perform a kernel update and system reboot as soon as possible.

Links to common Linux distributions:

- Debian: Security Tracker CVE-2026-31431

- Red Hat Enterprise Linux: RHEL Security Portal

- SUSE: SUSE CVE Database

- Ubuntu: Ubuntu Security Notices
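For user-managed servers, the following is a minimal sketch of how one might check whether a reboot is still pending after a kernel update. The function names are hypothetical helpers, not MetaCentrum tools. The sketch assumes that every installed kernel has a directory under /lib/modules and that GNU sort is available. It only compares version strings; it does not tell you whether the installed kernel already contains the fix, so check the advisory for your distribution first.

```shell
#!/bin/sh
# Hypothetical helper: succeeds (exit 0) when the running kernel release
# differs from the newest installed release.
# Arguments: $1 = running release, $2 = newest installed release.
reboot_needed() {
    [ "$1" != "$2" ]
}

# Print the newest installed kernel release. Assumes one directory per
# installed kernel under /lib/modules (or under the directory given as $1)
# and GNU sort, whose -V option sorts version numbers naturally.
newest_installed_kernel() {
    ls "${1:-/lib/modules}" | sort -V | tail -n 1
}

# Report whether this machine still runs an older kernel than it has installed.
check_kernel() {
    running=$(uname -r)
    newest=$(newest_installed_kernel)
    if reboot_needed "$running" "$newest"; then
        echo "Reboot needed: running $running, newest installed $newest"
    else
        echo "Running the newest installed kernel: $running"
    fi
}
```

After updating the kernel packages with your distribution's package manager, calling `check_kernel` shows whether the machine is still running the old kernel. Directories left behind by removed kernels can mislead this heuristic.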

Ivana Křenková, Mon May 11 21:40:00 CEST 2026

COMPLETED: Skirit Frontend Upgrade and Introduction of "Lite" Version

Dear users,

A significant upgrade of the skirit frontend to new hardware has been completed. Simultaneously, a lightweight version, skirit-lite, is now available.

New skirit frontend: Full performance on new HW

The main frontend skirit.metacentrum.cz (alias skirit.ics.muni.cz, skirit.grid.cesnet.cz) is now running on new, more powerful hardware. This is the primary address we recommend using for all new connections.

New skirit-lite frontend for lightweight tasks

The original hardware of the skirit frontend is not being retired but is changing its role. Under the new name skirit-lite.metacentrum.cz, it will serve as a "light" frontend (alias skirit-lite.ics.muni.cz).

- When to use skirit-lite? It is ideal for quick job management, script editing, or checking the status of calculations.

- When to use standard skirit? Use it for more demanding interactive work and operations requiring higher computing power on the frontend itself.

Important migration information

SSH key changes: In connection with the renaming and hardware replacement, your SSH client may warn you about a change in fingerprints (host keys). This is expected behavior in this case. You can verify the list of SSH keys in the documentation: https://docs.metacentrum.cz/en/docs/access/security/connect-auth
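If your client refuses to connect because of the changed host key, a sketch along the following lines may help. The function names are hypothetical; the commands are standard OpenSSH tools. The sketch assumes that the frontend offers an ed25519 host key. Always compare the printed fingerprint with the list in the documentation before accepting the new key.

```shell
#!/bin/sh
# forget_host_key HOST [KNOWN_HOSTS_FILE]
# Remove every stored key for HOST from the known_hosts file.
# ssh-keygen keeps a backup of the previous file as <file>.old.
forget_host_key() {
    ssh-keygen -R "$1" -f "${2:-$HOME/.ssh/known_hosts}"
}

# show_host_fingerprint HOST
# Fetch the ed25519 host key that HOST currently presents (assumption: the
# server offers this key type) and print its fingerprint for manual comparison.
show_host_fingerprint() {
    ssh-keyscan -t ed25519 "$1" 2>/dev/null | ssh-keygen -lf -
}
```

For example, `forget_host_key skirit.metacentrum.cz` followed by `show_host_fingerprint skirit.metacentrum.cz` removes the stale entry and prints the fingerprint to verify.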

Ivana Křenková, Thu May 07 21:40:00 CEST 2026

Significant upgrade of disk array storage-praha5-elixir

We have successfully completed the planned upgrade of the storage-praha5-elixir storage array. Thanks to this investment, the total available capacity has grown by an impressive 1 PB.

This increase will primarily benefit users involved in ELIXIR projects, as well as other MetaCentrum users utilizing our storage capacities in Prague.

What’s New?

- Home Directories (home): For ELIXIR users, we have tripled the available space. The standard quota is now set to 5 TB (further increases are possible in justified cases). Other users have 100 GB available.

- Project Directories: The capacity reserved for ELIXIR projects has doubled.

- Future Flexibility: A portion of the new space remains intentionally unallocated for now. This will allow us to respond flexibly to your future needs and "inflate" specific directories wherever the demand for data is currently highest.

The storage is available as standard on all MetaCentrum computing nodes at the usual path: /storage/praha5-elixir/

We believe that this new hardware will contribute to the efficient execution of your computations and scientific projects.

Ivana Křenková, Tue Apr 28 21:40:00 CEST 2026

Significant upgrade of disk array in České Budějovice

We are pleased to announce the completion of a significant upgrade to the disk array in the České Budějovice location.

This modernization brings not only a massive increase in available capacity but also higher throughput thanks to the replacement of the control hardware.

What has changed?

- Capacity increase: We have expanded the total storage volume from the original 150 TB to 1 PB (petabyte).

- Frontend modernization: The hardware has been replaced with significantly more powerful components, ensuring faster response times and higher stability during data-intensive operations.

- Increased user quotas: In connection with the array expansion, we have increased the basic disk quota (soft quota) for every MetaCentrum user on this array from the original 100 GB to 3 TB.

Technical parameters:

| Parameter | Original state | New state |
|---|---|---|
| Total array capacity | 150 TB | 1 PB |
| User disk quota | 100 GB | 3 TB |
| File count quota | 1M | 1M (unchanged) |
| Frontend Hardware | Standard | High-performance HW |
| Access path | /storage/budejovice1 | /storage/budejovice1 (unchanged) |

How to use the storage?

The array is available as standard on all MetaCentrum computing nodes at the usual path: /storage/budejovice1

We believe that this new hardware will contribute to the efficient execution of your computations and scientific projects.

Ivana Křenková, Tue Apr 28 21:40:00 CEST 2026

New Clusters in MetaCentrum: Infrastructure Expansion with CESNET and CERIT-SC Resources

We are pleased to announce the successful integration of new computing clusters owned by CESNET and CERIT-SC into the MetaCentrum infrastructure. This expansion brings increased capacity for both CPU and GPU calculations.

Below are the technical specifications of the new machines.

1. Cluster hildor (CESNET)

Owner: CESNET (hildor[1-20].metacentrum.cz, deimos[1-13].meta.zcu.cz, haldan[1-15].metacentrum.cz), České Budějovice, Plzeň, Brno

Capacity: 48 nodes / 6 144 CPU cores

Node configuration:

- CPU: 2x AMD EPYC 9555 (64 cores per CPU, 128 cores per node)

- RAM: 768 GiB

- Home: 2x 1.92 TB NVMe

- Net: 10 or 25 Gbit/s + InfiniBand HDR200

- Node performance: SPECrate 2017_fp_base 1700

The clusters are intended for computationally intensive tasks utilizing CPUs, and do not include GPU acceleration.

2. Cluster grogu (CERIT-SC)

Owner: CERIT-SC (grogu[1-3].cerit-sc.cz, grogu[4-8].cerit-sc.cz), Brno

Capacity: 8 nodes / 768 CPU cores / 12 GPUs

Node configuration:

- CPU: 2x AMD EPYC 9454 48-Core Processor (96 cores per node)

- GPU: 4x NVIDIA RTX PRO 6000 Blackwell Server Edition (grogu[1-3])

- RAM: 1536 GiB

- Home: 6x 7 TB NVMe

- Net: 100 Gbit/s

- Node performance: SPECrate 2017_fp_base 445

The cluster is intended for computationally intensive tasks utilizing CPUs; nodes grogu[1-3] additionally include 4 GPU accelerators each.

A complete list of available computing servers and their current utilization can be found here: MetaCentrum Hardware

We believe this new hardware will contribute to the effective implementation of your calculations and scientific projects.

Ivana Křenková, Tue Mar 31 21:40:00 CEST 2026

Skirit Frontend Upgrade and Introduction of "Lite" Version

Dear Users,

As part of our ongoing infrastructure improvements at MetaCentrum, we have completed a major upgrade of the Skirit frontend. To ensure a more stable and powerful environment for your work, we are migrating to new hardware and introducing a specialized lightweight version.

New Skirit: Full Power on New Hardware

The main frontend skirit.metacentrum.cz (alias skirit.grid.cesnet.cz) is now running on brand-new, high-performance hardware. This is the primary address we recommend for all new sessions.

New Feature: Skirit-Lite for Lightweight Tasks

The original Skirit hardware is not retiring; instead, it is transitioning into a new role. Under the new hostname skirit-lite.metacentrum.cz, it will serve as a "lightweight" frontend.

- When to use Skirit-Lite? Ideal for quick job management, script editing, or checking job status.

- When to use Standard Skirit? Recommended for demanding interactive work and operations requiring higher computational power directly on the frontend.

Important Migration Details

- Do not start new sessions on the old address: A nologin mode will soon be activated on the old machine (skirit.ics.muni.cz). Please direct all new logins exclusively to skirit.metacentrum.cz.

- Finish your current work: Existing active sessions on the old hardware will be allowed to finish. However, once they end, you will no longer be able to log back into that specific instance using the old hostname.

- Planned Reboot: Within the next few days, the old machine will undergo a reboot for software upgrades and will be officially renamed to skirit-lite.ics.muni.cz.

- SSH Key Changes: Due to the hardware swap and renaming, your SSH client may warn you about changed host keys. This is expected behavior in this case.

- The work on the frontends will otherwise remain unchanged for users, and home directories will be preserved without any changes.

Ivana Křenková, Tue Mar 24 21:40:00 CET 2026

Enabling Encryption for Interactive Jobs

Dear Users,

During this week, we will enable encryption for interactive jobs. This step is essential to enhance the security of data transmission and communication within the MetaCentrum computing environment.

For you as users, this update brings two practical changes that we would like to bring to your attention:

1. Kerberos Ticket Verification upon Login

The system will now strictly require a valid Kerberos ticket.

- If you do not have a valid Kerberos ticket at the moment the interactive job starts, the system will prompt you to renew it (enter password / kinit) when you attempt to connect to the job.

- If your ticket is valid, the login process will proceed as usual without further interaction.

2. Connection Time Limit (Timeout)

We are introducing a security time limit for starting your work.

- Once the interactive job starts on the cluster (a compute node is allocated), you have 3 hours to start interacting with the job.

- If you do not connect to the running job within 3 hours, it will be automatically cancelled.
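The two rules above can be sketched as a pair of small shell helpers. The function names are hypothetical and not part of any MetaCentrum tool. The sketch assumes a standard Kerberos client, where `klist -s` reports only through its exit status whether a valid ticket is present, and it uses Unix timestamps such as those printed by `date +%s`.

```shell
#!/bin/sh
# Limit stated in the announcement: 3 hours to connect after the job starts.
CONNECT_LIMIT_SECONDS=$((3 * 60 * 60))

# has_valid_ticket: succeeds when the Kerberos credential cache holds a
# valid (non-expired) ticket. `klist -s` prints nothing and only sets the
# exit status.
has_valid_ticket() {
    klist -s
}

# seconds_left START NOW
# Print how many seconds remain before an unattended interactive job is
# cancelled. START is when the job started and NOW is the current time,
# both as Unix timestamps. Prints 0 once the limit has passed.
seconds_left() {
    left=$((CONNECT_LIMIT_SECONDS - ($2 - $1)))
    [ "$left" -gt 0 ] || left=0
    echo "$left"
}
```

A typical use would be `has_valid_ticket || kinit` before submitting an interactive job, so that the ticket is already valid when the job starts.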

These measures help us maintain a secure and efficiently utilized infrastructure. Thank you for your understanding.

In case of any issues, please contact user support.

The MetaCentrum Team

Ivana Křenková, Mon Feb 09 21:40:00 CET 2026

Your Opinion Matters: Evaluate e-INFRA CZ Services

Are you computing with us? We want to hear from you.

To ensure our computing and cloud services continue to meet the demands of your research, we need your input. Your feedback is crucial for our strategic planning. It helps us identify exactly which aspects of our infrastructure, from job scheduling to storage availability, need improvement or expansion.

If you have already completed the survey, thank you very much for your feedback.

- Privacy & Reward: The survey is anonymous by default. However, if you choose to provide your login, we will credit your MetaCenter account with the equivalent of 0.5 publications as a thank you for your time.

- Deadline: Please submit your responses by 14 February 2026.

- Link: User Satisfaction Survey

Ivana Křenková, Fri Jan 09 21:40:00 CET 2026

New Clusters in MetaCentrum: Infrastructure Expansion with CESNET and ZČU Resources

We are pleased to announce the successful integration of new computing clusters owned by CESNET and the University of West Bohemia (ZČU) into the MetaCentrum infrastructure. This expansion brings increased capacity for both CPU and GPU calculations, as well as a specialized SMP node with large shared memory.

Below are the technical specifications of the new machines.

1. Cluster adan (CESNET)

This cluster replaces the original cluster of the same name. It is designed for demanding CPU calculations and does not contain graphics accelerators. The cluster is already available in standard scheduler queues.

- Owner: CESNET

- Address: adan[1-48].grid.cesnet.cz

- Total capacity: 48 nodes / 6 144 CPU cores

- Node configuration:

  - CPU: 2x AMD EPYC 9554 64-Core Processor

  - RAM: 768 GiB

  - Disk: 2x 3.84 TB NVMe

  - Net: 25 Gbit/s

  - Node performance: SPECrate 2017_fp_base: 1360

2. Cluster alfrid (ZČU)

The alfrid[1-9].meta.zcu.cz cluster underwent significant modernization and expansion in two phases (May and December 2025). It replaces the original hardware and now offers 8 GPU nodes and one SMP node.

a. GPU nodes: alfrid (4 nodes) and alfrid-II (4 nodes). These nodes are equipped with NVIDIA L40 and L40S accelerators, suitable for accelerated calculations and AI tasks.

- Owner: ZČU Plzeň

- Total capacity: 8 nodes / 1 024 CPU cores

- Node configuration:

  - CPU: 2x AMD EPYC 9554 64-Core Processor

  - RAM: 1 536 GiB (alfrid) / 768 GiB (alfrid-II)

  - GPU: 2x NVIDIA L40 48GB (alfrid) / 4x NVIDIA L40S 48GB (alfrid-II)

  - Disk: 2x 7 TB NVMe

  - Net: 25 Gbit/s

  - Node performance: SPECrate 2017_fp_base: 1230

b. SMP node (alfrid-smp): a specialized node designed for tasks requiring a large amount of shared memory.

- Owner: ZČU Plzeň

- Address: alfrid-smp.meta.zcu.cz

- Capacity: 1 node / 128 CPU cores

- Node configuration:

  - CPU: 2x AMD EPYC 9554 64-Core Processor

  - RAM: 4 608 GiB (approximately 4.5 TB)

  - Disk: 2x 7 TB NVMe

  - Net: 10 Gbit/s

  - Node performance: SPECrate 2017_fp_base: 1200

A complete list of available computing servers and their current utilization can be found here: MetaCentrum Hardware

We believe this new hardware will contribute to the effective implementation of your calculations and scientific projects.

Ivana Křenková, Fri Jan 09 21:40:00 CET 2026

Open Access Grant Competition IT4Innovations


Ivana Křenková, Mon Oct 20 21:40:00 CEST 2025

Feedback from the MetaCentrum 2025 Users' Seminar

On Thursday, October 2, 2025, the MetaCentrum 2025 High-Performance Computing Seminar took place at the Lávka Club in Prague, with more than 90 participants attending in person and another 40 joining online.

The program focused on data processing and storage, security, working with containers and the cloud, as well as the use of AI models on the MetaCentrum and CERIT-SC infrastructure. Experiences were shared not only by experts from CESNET and CERIT-SC, but also by users from research groups at Masaryk University and Charles University.

The seminar presentations are available on the event page. The same link will also host a video recording of the seminar once it has been edited.

Ivana Křenková, Fri Oct 03 21:40:00 CEST 2025

You're Invited: Basics of Quantum Machine Learning (IT4Innovations)

Dear users,

https://events.it4i.cz/event/354/


Ivana Křenková, Tue Sep 09 21:40:00 CEST 2025

You're Invited: MetaCentrum 2025 Users' Seminar

Dear users,

We cordially invite you to the MetaCentrum 2025 Seminar, taking place on Thursday, October 2, 2025, at Novotného lávka in Prague with a stunning view of Charles Bridge and the city center.

This seminar will focus on data processing, analysis, and storage, as well as introducing the latest developments in grid, cloud, and Kubernetes environments at MetaCentrum and CERIT-SC.

Program and more information:

https://metavo.metacentrum.cz/en/seminars/Seminar2025/index.html

Venue:

Lávka, Novotného lávka 201/1

110 00 Prague 1 – Staré Město

http://lavka.cz/

We look forward to seeing you there!

MetaCentrum

Ivana Křenková, Thu Aug 21 21:40:00 CEST 2025

New GPU Cluster in MetaCentrum

We are pleased to announce that a new computing cluster fobos.meta.zcu.cz has been successfully integrated into the MetaCentrum infrastructure.

Cluster Specification

- Number of nodes: 20

- Total CPU cores: 1920

- Configuration of each node:

- CPU: 2x AMD EPYC 9454 2.75GHz 48-core 290W Processor

- RAM: 768 GiB

- GPU: 4x NVIDIA L40S 48GB

- Disk: 4x 3.84 TB NVMe

- Network: Ethernet 100Gbit/s, InfiniBand 200Gbit/s

- Performance (SPECrate 2017_fp_base): 1160

- Owner: CESNET

Access to Computing Resources

The cluster is available in regular queues.

A complete list of available computing servers can be found here: https://metavo.metacentrum.cz/pbsmon2/hardware

We believe that this new hardware will contribute to more efficient execution of your computations and scientific projects!

Ivana Křenková, Tue Aug 19 23:50:00 CEST 2025

BeeGFS: Fast Shared Scratch

We're pleased to announce the availability of a new fast shared scratch using the parallel distributed file system BeeGFS on our bee.cerit-sc.cz cluster. This new resource, available as scratch_shared, is specifically designed for high-performance computing (HPC) needs and offers several advantages for data-intensive and compute-intensive applications.

Why Use BeeGFS in MetaCentrum?

BeeGFS is ideal for demanding jobs that require:

- Working with large files or a huge number of small files – efficiently handle massive datasets, making it an ideal choice for applications that require fast and scalable storage.

- Utilizing many threads or processes that read or write in parallel – enables high-performance and concurrent access to data, making it perfect for applications that require simultaneous reads and writes.

- Spanning multiple compute nodes – can handle workloads that span multiple compute nodes, allowing for seamless scalability and performance.

- Sequential computations with intermediate results – well-suited for workflows where subsequent computations can pick up intermediate results left in the scratch directory, eliminating the need to copy data to permanent storage or run on the same machine as the previous step.

Typical Use Cases:

- High-Performance Computing (HPC) – BeeGFS is designed to efficiently handle large files and parallel input/output operations, making it an ideal choice for scientific computing workloads.

- Machine Learning and AI – With BeeGFS, you can train machine learning models faster by accessing large volumes of data with high-throughput and low-latency.

- Simulations, Rendering, Genomics, and Big Data Research – BeeGFS is perfect for handling massive datasets, such as those found in 3D rendering, complex simulations, genomic sequencing, and big data research.

More Information:

- Blog post: https://blog.e-infra.cz/blog/beegfs/

- Documentation: https://docs.metacentrum.cz/en/docs/computing/infrastructure/scratch-storages#shared-scratch-on-cluster-beecerit-sccz

Ivana Křenková, Fri Aug 08 23:50:00 CEST 2025

New clusters from ELIXIR project integrated in MetaCentre

We are pleased to announce that the new computing clusters elbi1.hw.elixir-czech.cz, elmu1.hw.elixir-czech.cz, eluo1.hw.elixir-czech.cz, and elum1.hw.elixir-czech.cz, operated by the ELIXIR project, have been successfully integrated into the MetaCentre infrastructure. The clusters have very similar configurations and are located at different sites.

Clusters specification

- elmu1.hw.elixir-czech.cz (2400 CPU, 25 nodes) - computing cluster (MUNI Brno)

- eluo1.hw.elixir-czech.cz (576 CPU, 6 nodes) - computing cluster (UOCHB Praha)

- elum1.hw.elixir-czech.cz (96 CPU, 1 node) - computing cluster (UMG Praha)

Access to Computing Resources

The clusters are available in ELIXIR's priority queues. The elbi1 cluster has 2 GPU cards and is also accessible in the gpu queue. Other users can use the clusters in short regular queues with a limit of 24 hours.

A complete list of available computing servers can be found here: https://metavo.metacentrum.cz/pbsmon2/hardware

We believe that this new hardware will contribute to more efficient execution of your computations and scientific projects!

Ivana Křenková, Tue Feb 04 23:50:00 CET 2025

AI chat integration into documentation, WebUI with AI chat and new blog

We are pleased to announce three new features for you:

- Documentation in a new design with integrated AI chat -- will be available soon!

- WebUI with integrated AI chat

- New blog

Documentation in a new design with integrated AI chat

Will be available soon! Our existing documentation https://docs.metacentrum.cz/ has undergone a visual upgrade.

While its structure remains unchanged, we've converted it to a new technology that supports AI chat integration (https://docs.metacentrum.cz/en/docs/tutorials/chat-help) to help you quickly find answers.

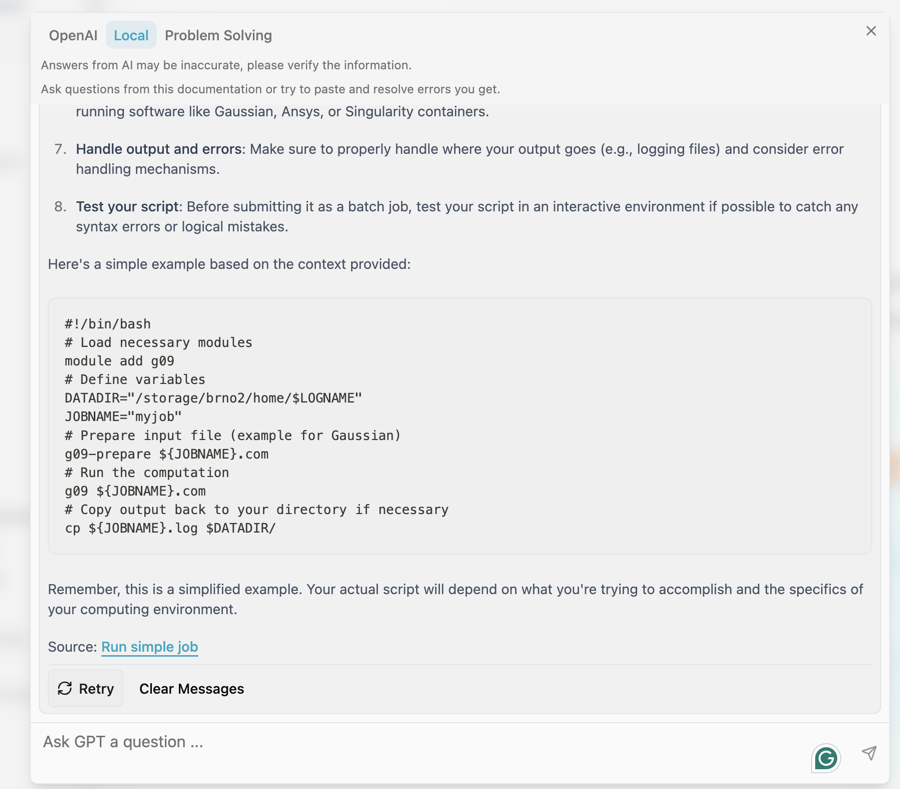

AI chat enables interactive search for information contained in the documentation. Select Local if you want a response based on the content of the documentation. The Problem Solving section solves the most common problems you may encounter. We will expand both sections on frequently asked questions.

We will be glad to receive your feedback. We will continuously improve the documentation based on your questions and comments.

Web UI with integrated AI chat

At the same time, we have launched a separate AI chat available at https://chat.ai.e-infra.cz/. Several models are available to try out, and the chat supports drawing pictures and reading attached documents. The models run locally on our computing resources, so you don't have to worry about data leakage outside our infrastructure.

All models offered are also available via API and can be used for your projects. Documentation is available at

https://docs.cerit.io/en/docs/web-apps/chat-ai

New blog

You can find interesting facts about the chat and other topics related to infrastructure in our blog https://blog.e-infra.cz/

Ivana Křenková, Mon Mar 10 21:40:00 CET 2025

GRANT COMPETITION in IT4Innovations

Dear Users,

This competition is an excellent opportunity to gain priority access to support your upcoming projects. More detailed information can be found in the attached invitation.


Ivana Křenková, Mon Feb 24 21:40:00 CET 2025

Change in Access to NVIDIA DGX H100 80GB (capy.cerit-sc.cz)

We would like to inform users about a change in access to the NVIDIA DGX H100 80GB (capy.cerit-sc.cz) computing system.

From now on, access will only be granted based on an approved request for computing time. The criteria and application process can be found here: Link to documentation.

If your computing requirements do not meet the specified criteria but you still need a GPU with large memory, you can use the GPU cluster bee (bee.cerit-sc.cz) with NVIDIA 2×H100 94GB. More information about this cluster can be found in the previous news post: Link to news.

Yours, MetaCentrum

Ivana Křenková, Mon Feb 17 23:50:00 CET 2025

New Computing Cluster in MetaCentrum

We are pleased to announce that a new computing cluster farin.grid.cesnet.cz, operated by the Faculty of Civil Engineering at CTU in Prague, has been successfully integrated into the MetaCentrum infrastructure.

Cluster Specification

- Number of nodes: 4

- Total CPU cores: 512

- Configuration of each node:

- CPU: 2x AMD EPYC 9554 64-Core Processor

- RAM: 2304 GiB

- Disk: 14 TB NVMe

- Network: Ethernet 25 Gbit/s

- Performance (SPECrate 2017_fp_base): 1300

- Owner: Faculty of Civil Engineering at CTU in Prague

Access to Computing Resources

The cluster is available in the owner’s priority queue cvut@pbs-m1.metacentrum.cz. Other users can access the cluster in short regular queues with a time limit of up to 24 hours. Students and employees of the Faculty of Civil Engineering at CTU can request access to the priority queue.

A complete list of available computing servers can be found here: https://metavo.metacentrum.cz/pbsmon2/hardware

We believe that this new hardware will contribute to more efficient execution of your computations and scientific projects!

Ivana Křenková, Tue Feb 04 23:50:00 CET 2025

Migration of personal projects in MetaCentrum OpenStack Cloud

The migration of projects running in the e-INFRA CZ / Metacentrum OpenStack cloud Brno G1 [1] to the new environment Brno G2 [2], which took place during 2024, is approaching its final stage.

Personal projects [3] can be migrated from February 2025, and users can perform the migration themselves.

The migration procedure will be updated during January 2025 on the website [2], [4].

We will keep you informed in more detail about the procedure and news on the homepage of G2 e-INFRA CZ / Metacenter OpenStack cloud [2].

Thank you for your understanding.

e-INFRA CZ / Metacentrum OpenStack cloud team

[1] https://cloud.metacentrum.cz/

[2] https://brno.openstack.cloud.e-infra.cz/

[3] https://docs.e-infra.cz/compute/openstack/technical-reference/brno-g1-site/get-access/#personal-project

[4] https://docs.e-infra.cz/compute/openstack/migration-to-g2-openstack-cloud/#may-i-perform-my-workload-migration-on-my-own

Ivana Křenková, Fri Dec 27 23:50:00 CET 2024

New SW available

Dear MetaCentrum Users,

We are pleased to announce several updates that will enhance your computing capabilities within our center. We look forward to helping you streamline your projects with state-of-the-art software and new services.

New Licenses for MolPro and Turbomole

MetaCentrum now offers new commercial licenses for **MolPro** and **Turbomole**, which are designed for quantum chemistry calculations. These tools enable users to perform detailed simulations and analyses of molecular systems with higher accuracy and efficiency.

- MolPro is a high-performance program for electronic structure calculations, particularly suited for advanced calculation theories such as Hartree-Fock or correlation theories.

- Turbomole is a comprehensive package for quantum chemical calculations, known for its efficiency in processing large systems. It allows for a wide range of calculations, including electron structure and molecule geometry optimization.

For more details on all software options available at MetaCentrum, please visit the following link: https://docs.metacentrum.cz/software/alphabet/

New Web Service Foldify

We are pleased to introduce the new service Foldify, which is now fully integrated into the Kubernetes environment. Foldify is a cutting-edge platform designed for protein folding in 3D space, known for its easy and user-friendly interface. This service significantly simplifies and streamlines the work of professionals in biochemistry and biophysics. It offers users a wide range of data processing options, as it supports not only the popular AlphaFold but also tools such as ColabFold, OmegaFold, and ESMFOLD.

You can discover and utilize the Foldify service at the following address: https://foldify.cloud.e-infra.cz/

Wishing you a peaceful Christmas and all the best in the New Year,

Your MetaCentrum

Ivana Krenkova, Mon Dec 23 23:50:00 CET 2024

New HW in MetaCenter

The MetaCenter has been recently expanded with two new powerful clusters:

1) Masaryk University (CERIT-SC) added 20 additional nodes with a total of 960 CPU cores and 32x NVIDIA H100 with 94 GB of GPU RAM suitable for AI-intensive computing.

- 10 nodes are made available in batch mode in MetaCenter - cluster bee.cerit-sc.cz,

- 8 nodes are available in Kubernetes / Rancher

- 2 nodes are in Sensitive Cloud for working with sensitive data.

2) The Institute of Physics of the Academy of Sciences added a new cluster magma.fzu.cz consisting of 23 nodes with 2208 CPU cores and 1.5 TB RAM each

Configuration and access

1) Cluster bee.cerit-sc.cz

There are 10 nodes involved in the MetaCenter batch system, with a total of 960 CPU cores and 20x NVIDIA H100, with the following configuration of each node:

| CPU | 2x AMD EPYC 9454 48-Core Processor |
|---|---|
| RAM | 1536 GiB |
| GPU | 2x H100 with 94 GB GPU RAM |
| disk | 8x 7TB SSD with BeeGFS support |
| net | Ethernet 100Gbit/s, InfiniBand 200Gbit/s |
| note | Performance of each node according to SPECrate 2017_fp_base = 1060 |
| owner | CERIT-SC |

The cluster supports NVidia GPU Cloud (NGC) tools for deep learning, including pre-configured environments, and is accessible in regular gpu queues.

We are also preparing a change in access to the DGX H100 machine, which will remain in a dedicated queue gpu_dgx@meta-pbs.metacentrum.cz. It will be usable on demand and only by users who can prove that their jobs support NVLink and are able to use at least 4 or all 8 GPU cards at once. We will keep you posted on the upcoming change.

2) Cluster magma.fzu.cz

There are 23 new nodes involved in the MetaCenter batch system, with a total of 2208 CPU cores and the following configuration for each node:

| CPU | 2x AMD EPYC 9454 48-Core Processor CPU @ 2.7GHz |
|---|---|
| RAM | 1536 GiB |
| disk | 1x 3.84 TB NVMe |
| net | Ethernet 10Gbit/s |
| note | Performance of each node according to SPECrate 2017_fp_base = 1160 |
| owner | FZÚ AV ČR |

The cluster is accessible in the priority queue of the owner luna@pbs-m1.metacentrum.cz and for other users in short regular queues.

Complete list of the available HW: http://metavo.metacentrum.cz/pbsmon2/hardware.

Ivana Křenková, Mon Nov 18 23:40:00 CET 2024

Next round of the grant competition at IT4Innovations National Supercomputing Center

Dear users,

we would like to forward information about the grant competition at IT4I.

Ivana Křenková, Fri Oct 18 21:40:00 CEST 2024

Switching to the new OpenPBS and Debian12

Dear users,

At the beginning of March we first announced the start of the migration from PBSPro to the new OpenPBS.

- All available computing capacity will be available under a single PBS server pbs-m1.metacentrum.cz. We are continuing with moving the CERIT-SC (cerit-pbs) and ELIXIR CZ (elixir-pbs) clusters under the new PBS server.

- The existing PBSPro servers will be decommissioned when the remaining jobs are finished. Please move your jobs to the new environment now; this will make the migration easier and faster to complete.

- At the same time as the migration to the new PBS we are upgrading the OS: Debian11 -> Debian12.

Please use the new OpenPBS environment pbs-m1.metacentrum.cz for your tasks. If you don't want to change anything in your scripts, submit jobs from frontends running Debian12. The queue names remain the same; only the PBS server changes (QUEUE_NAME@pbs-m1.metacentrum.cz).
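As an illustration of the QUEUE_NAME@pbs-m1.metacentrum.cz form, the following sketch builds a fully qualified queue name from a bare one. The function name `qualify_queue` and the variable `PBS_SERVER` are hypothetical helpers introduced for this example only; the server name comes from the announcement.

```shell
#!/bin/sh
# Server name taken from the announcement above.
PBS_SERVER="pbs-m1.metacentrum.cz"

# qualify_queue QUEUE
# Append the new PBS server to a bare queue name. Names that already
# contain "@server" are printed unchanged.
qualify_queue() {
    case "$1" in
        *@*) printf '%s\n' "$1" ;;
        *)   printf '%s@%s\n' "$1" "$PBS_SERVER" ;;
    esac
}
```

A submission could then look like `qsub -q "$(qualify_queue QUEUE_NAME)" job.sh`, where QUEUE_NAME and job.sh are placeholders for your own queue and script.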

The list of available frontends including the current OS can be found at https://docs.metacentrum.cz/computing/frontends/

About 3/4 of the clusters are now available in the new OpenPBS environment. We are working hard to reinstall the rest and are waiting for their running jobs to finish.

Overview of machines with Debian12 feature: https://metavo.metacentrum.cz/pbsmon2/props?property=os%3Ddebian12

You can test whether your job will run in the new OpenPBS environment in the qsub builder: https://metavo.metacentrum.cz/pbsmon2/qsub_pbspro

For up-to-date information on the migration, see the documentation at https://docs.metacentrum.cz/tutorials/debian-12/ (we will update the migration procedure here).

Your MetaCenter

Ivana Křenková, Tue May 14 15:35:00 CEST 2024

Modifications in the Open OnDemand environment

Dear users,

We have made a change to the Open OnDemand (OOD) service that allows OOD jobs to be started on clusters that do not have a default home on the brno2 storage. Due to this change, the existing data, command history, etc., stored on brno2 will not be available in new OOD jobs if they are run on a machine with a different home directory.

To access the original data from brno2 storage, you must create a symbolic link to the new storage. The example below demonstrates setting up a symbolic link for the R program's history.

ln -s /storage/brno2/home/user_name/.Rhistory /storage/new_location/home/user_name/.Rhistory

Yours MetaCenter

Ivana Křenková, Mon May 13 15:35:00 CEST 2024

e-INFRA CZ Conference 2024

The e-INFRA CZ Conference 2024, which took place on 29-30 April 2024 in Prague at the Occidental Hotel, was attended by 180 guests.

Presentations are available at the event page at https://www.e-infra.cz/konference-e-infra-cz

A video recording from the whole event will be available soon.

Ivana Křenková, Thu May 02 15:35:00 CEST 2024

Switching to the new PBS and OS Debian12

At the beginning of March we announced the start of the migration from PBSPro to the new OpenPBS.

- Approximately half of the computing power is now available in the new environment managed by the pbs-m1.metacentrum.cz scheduler, while the existing meta-pbs.metacentrum.cz scheduler will stop accepting jobs longer than 4 days so that we can reinstall and move the remaining computing capacity. The cerit-pbs and elixir-pbs PBS environments are running unchanged for now.

- The existing PBSPro servers will be decommissioned in the future because they cannot directly communicate with the new OpenPBS servers and utilities.

- At the same time as the migration to the new PBS, an OS upgrade is underway: Debian11 -> Debian12.

If this has not already happened, please use the new OpenPBS environment pbs-m1.metacentrum.cz for your jobs. If you don't want to change anything in your scripts, submit jobs temporarily from the new zenith frontend or from the reinstalled nympha, tilia and perian frontends running in the new OpenPBS environment (already with Debian12 OS). The other frontends will be migrated gradually.

For a list of available frontends, including the current OS, see https://docs.metacentrum.cz/computing/frontends/

The new OpenPBS can also be accessed from other frontends; the openpbs module (module add openpbs) must be activated in such case.

Problems with compatibility of some applications with Debian12 OS are continuously solved by recompiling new software modules. If you encounter a problem with your application, try adding the debian11/compat module to the beginning of your startup script (module add debian11/compat). If problems persist (missing libraries, etc.), let us know at meta(at)cesnet.cz.

About half of the clusters are now available in the new OpenPBS environment, and we are working hard to reinstall the others. We are waiting for the jobs still running on them to finish. Overview of machines with Debian12 feature: https://metavo.metacentrum.cz/pbsmon2/props?property=os%3Ddebian12

You can test whether your job will run in the new OpenPBS environment in the qsub builder: https://metavo.metacentrum.cz/pbsmon2/qsub_pbspro

For up-to-date information on the migration, see the documentation at https://docs.metacentrum.cz/tutorials/debian-12/ (we will update the migration procedure here).

Ivana Křenková, Mon Apr 08 15:35:00 CEST 2024

e-INFRA CZ Conference 2024 invitation

Dear users,

We would like to invite you to participate in the e-INFRA CZ Conference 2024, which will take place on 29-30 April 2024 in Prague at the Occidental Hotel.

At the conference we will present e-INFRA CZ infrastructure, its services, international projects and research activities. We will introduce you to the latest news and outline the plans of the MetaCentre. The second day of the conference will bring concrete advice and examples of how to use the infrastructure.

The conference will be held in English.

For more information, agenda and registration, visit the event page at https://www.e-infra.cz/konference-e-infra-cz

We look forward to seeing you,

Yours MetaCenter

Ivana Křenková, Wed Mar 20 21:40:00 CET 2024

Open day for the launch of the OSCARS Open Call for Open Science Projects invitation

Dear users,

we are forwarding an invitation to the Open Day for the launch of the OSCARS Open Call for Open Science Projects.

15 March 2024

Best regards,

Ivana Křenková, Wed Mar 13 23:40:00 CET 2024

MetaCentrum & CERIT-SC infrastructure news

1) Switching to the new PBS and Debian12

We are preparing the transition to the new PBS - OpenPBS. Existing PBSPro servers will be decommissioned in the future because they cannot communicate directly with the new OpenPBS servers and utilities. At the same time as the migration to the new PBS we are upgrading the OS: Debian11 -> Debian12.

For testing purposes we have prepared a new OpenPBS environment pbs-m1.metacentrum.cz with new frontend zenith running on Debian12 OS:

- new frontend zenith.cerit-sc.cz (aka zenith.metacentrum.cz) running Debian12 OS

- new OpenPBS server pbs-m1.metacentrum.cz

- home /storage/brno12-cerit/

Gradually the new environment will be added to other clusters.

Overview of machines running Debian12: https://metavo.metacentrum.cz/pbsmon2/props?property=os%3Ddebian12

List of available frontends including the current OS: https://docs.metacentrum.cz/computing/frontends/

The new PBS can also be accessed from other frontends, but the openpbs module (module add openpbs) must be activated.

We are continuously solving compatibility problems of some applications with Debian12 OS by recompiling new software modules. If you encounter a problem with your application, try adding the debian11/compat module to the beginning of the startup script (module add debian11/compat). If problems persist (missing libraries, etc.), let us know at meta(at)cesnet.cz.

For more information, see the documentation at https://docs.metacentrum.cz/tutorials/debian-12/ (we will specify the migration procedure here).

2) Survey on satisfaction with MetaCentrum / e-INFRA CZ services

We would like to remind you of the opportunity to share with us your experience with computing services of the large research infrastructure e-INFRA CZ, which consists of e-infrastructures CESNET, CERIT-SC and IT4Innovations. Please complete the questionnaire by 8 March 2024. Your answers will help us to adjust our services to better suit you.

If you have already completed the questionnaire, thank you for doing so! We greatly appreciate it.

The questionnaire is available at https://survey.e-infra.cz/compute

3) Changes in the availability of commercial software (Matlab, Mathematica)

Matlab

We have acquired a new academic license for 200 instances of Matlab 9.14 and later (including a wide range of toolboxes), covering the computing environments of MetaCenter, CERIT-SC and IT4Innovations.

The new license comes with stricter conditions compared to the previous version. Please be aware that it is exclusively valid for use from MetaCenter/IT4Innovations IP addresses. Consequently, it cannot be utilized for running Matlab on personal computers or within university lecture rooms.

More information: https://docs.metacentrum.cz/software/sw-list/matlab/

Mathematica

Starting this year, MetaCentrum no longer holds a grid license for the general use of SW Mathematica (the supplier was unable to offer a suitable licensing model).

Currently, Mathematica 9 licenses are restricted to members of UK (Charles University) and JČU (University of South Bohemia) who have their own licenses for students and employees.

If you have your own (institutional) Mathematica software license, please contact us for more information at meta@cesnet.cz.

More information: https://docs.metacentrum.cz/software/sw-list/wolfram-math/

4) Available graphical environments (Chipster, Galaxy, OnDemand, Kubernetes/Rancher, Jupyter Notebooks, AlphaFold)

Chipster

MetaCenter has recently made its own instance of the Chipster tool available to users at https://chipster.metacentrum.cz/.

Chipster is an open-source tool for analyzing genomic data. Its main purpose is to enable researchers and bioinformatics experts to perform advanced analyses on genomic data, including sequencing data, microarrays, and RNA-seq:

- User-friendly interface that allows easy data manipulation and analysis.

- Ability to add and combine various modules for specific tasks.

- Numerous predefined analyses (over 500), such as differential gene expression, GO analysis, variant calling, and more.

- Optimized for efficient computations and handling large datasets.

- Integration with other bioinformatics tools and databases.

More information: https://docs.metacentrum.cz/related/chipster/

Galaxy for MetaCenter users

Galaxy is an open web platform designed for FAIR data analysis. Originally focused on biomedical research, it now covers various scientific domains. For MetaCentrum users, we have prepared two Galaxy environments for general use:

a) usegalaxy.cz

General portal at https://usegalaxy.cz/ mirrors the functionality (especially the set of available tools) of global services (usegalaxy.org, usegalaxy.eu). Additionally, it offers significantly higher user quotas (both computational and storage) for registered MetaCentrum users. Key features:

- Flexible tool supporting various data types and analyses. It can be used for bioinformatics, chemistry, physics, social sciences, and other fields.

- Workflow creation and sharing, allowing different steps (filtering, transformation, analysis, and data visualization) to be combined.

- Integration with other tools and libraries (Python, R, or SQL) for advanced analyses.

- Data visualization tools (graphs, maps, and animations).

More information: https://docs.metacentrum.cz/related/galaxy/

b) RepeatExplorer Galaxy

In addition to the general-purpose Galaxy, we offer our users a dedicated Galaxy instance with the Repeat Explorer tool. You need to register for the service.

RepeatExplorer is a powerful data processing tool that is based on the Galaxy platform. Its main purpose is to characterize repetitive sequences in data obtained from sequencing. Key features:

- enables the identification and analysis of repetitive sequences in the genome

- graphical clustering allows visualisation of repetitive sequences

- includes tools for detecting protein coding domains of transposable elements.

More information: https://galaxy-elixir.cerit-sc.cz/

OnDemand

Open OnDemand https://ondemand.grid.cesnet.cz/ is a service that allows users to access computational resources through a web browser in graphical mode. The user can run common PBS jobs, access frontend terminals, copy files between repositories, or run multiple graphical applications directly in the browser.

Some of the features of Open OnDemand include:

- Allows viewing and managing files in the home directory on the /storage/brno2 repository, as well as access to other MetaCenter repositories

- Displays a list of your running jobs on any PBS server, and new jobs can be created and started.

- Provides terminal access to skirit/zuphux/elmo front-ends

- Provides an interactive graphical user interface, including MetaCentrum Remote Desktop, ANSYS, MATLAB, Jupyter Notebooks, RStudio,..

More information: https://docs.metacentrum.cz/software/ondemand/

Kubernetes/Rancher

A number of graphical applications are also available in Kubernetes/Rancher https://rancher.cloud.e-infra.cz/dashboard/ under the management of CERIT-SC (Ansys, Remote Desktop, Matlab, RStudio, ...)

More information: https://docs.cerit.io/

JupyterNotebooks

Jupyter Notebooks is an "as a Service" environment based on Jupyter technology. It is a tool that is accessible via a web browser and allows users to combine code (mainly in Python) with Markdown text, math, calculations and rich media content.

MetaCenter users can use Jupyter Notebooks in three flavors:

More information: https://docs.metacentrum.cz/related/jupyter/

b) in Kubernetes: Jupyter can also be run in a Kubernetes cluster. In this case, you also log in using your Metacentrum login credentials.

c) as an application in OnDemand

https://ondemand.grid.cesnet.cz/

AlphaFold

a) CERIT-SC offers access to AlphaFold as a Service in a web browser (as a pre-built Jupyter Notebook).

More information: https://docs.cerit.io/docs/alphafold.html

b) in batch jobs in OnDemand https://ondemand.grid.cesnet.cz/pun/sys/myjobs/workflows/new

c) in batch jobs using RemoteDesktop and pre-made containers for Singularity

More information: https://docs.metacentrum.cz/software/sw-list/alphafold/

5) Data migration from Archival Storage to Object Storage (DU CESNET)

The archive repository du4.cesnet.cz, connected at MetaCenter as storage-du-cesnet.metacentrum.cz, is out of warranty and is experiencing a number of technical problems with the tape library mechanics. These do not compromise the stored data itself, but they complicate access to it. Colleagues at CESNET Data Storage are preparing to migrate the existing data to a new system (Object Storage).

We now need to reduce the traffic on this repository as much as possible. Please:

- restrict writes and reads to/from this storage

- do not use data from this storage directly for MetaCenter calculations

If you need the data stored here for calculations, please arrange a priority migration with our colleagues at du-support@cesnet.cz

If, on the other hand, you have data stored here that you no longer plan to use or move (for example, old backups), please also contact colleagues at du-support@cesnet.cz.

Ivana Křenková, Mon Mar 04 15:35:00 CET 2024

SVS FEM (Ansys) invitation

Dear users,

we are forwarding an invitation to courses organized by SVS FEM (Ansys).

SVS FEM s.r.o., Trnkova 3104/117c, 628 00 Brno

+420 543 254 554 | http://www.svsfem.cz

Best regards,

Ivana Křenková, Thu Feb 01 23:40:00 CET 2024

Decommission of /storage/brno3-cerit/ and /storage/brno1-cerit/ disk arrays

Due to failure and age, we have recently decommissioned or plan to decommission the oldest CERIT-SC disk arrays in the near future:

- /storage/brno3-cerit/

- /storage/brno1-cerit/

Decommission of /storage/brno3-cerit/

We recently decommissioned the /storage/brno3-cerit/ disk array and moved the data from the /home directories to /storage/brno12-cerit/home/LOGIN/brno3/ (alternatively directly to /home if it was empty on the new repository).

The symlink /storage/brno3-cerit/home/LOGIN/... , which leads to the same data on the new array, remained temporarily functional. From now on, please use the new path to the same data /storage/brno12-cerit/home/LOGIN/...

All data from brno3 has already been physically moved to the new array. There is no need to copy anything anywhere.

Decommission of /storage/brno1-cerit/

In the near future we will start moving data from the /storage/brno1-cerit/ disk array to /storage/brno12-cerit/home/LOGIN/brno1/.

We will move the data at a time when it will not be used in jobs.

Temporarily, the symlink /storage/brno1-cerit/home/LOGIN/... will remain functional, leading to the same data on the new array. The symlink will be removed when the old array is removed, and the data will then be available as /storage/brno12-cerit/home/LOGIN/brno1/.
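For scripts that still contain hard-coded paths to the old arrays, a small translation helper may be useful. The Python sketch below is only an illustration under one assumption: that data from /storage/brnoN-cerit/home/LOGIN/ ended up in /storage/brno12-cerit/home/LOGIN/brnoN/, as described above. It does not cover the case where brno3 data was placed directly into an empty home directory. The function name new_path and the login "alice" are hypothetical.

```python
from pathlib import PurePosixPath

NEW_ARRAY_HOME = PurePosixPath("/storage/brno12-cerit/home")

# Old array mount point -> subdirectory assumed to be used on the new array.
OLD_ARRAYS = {
    "/storage/brno3-cerit": "brno3",
    "/storage/brno1-cerit": "brno1",
}


def new_path(old_path):
    """Translate a path on a decommissioned array to its assumed new location."""
    path = PurePosixPath(old_path)
    for old_root, subdir in OLD_ARRAYS.items():
        home = PurePosixPath(old_root) / "home"
        try:
            relative = path.relative_to(home)
        except ValueError:
            continue  # the path is not under this old array
        if not relative.parts:
            raise ValueError("path must include a login directory")
        login, rest = relative.parts[0], relative.parts[1:]
        return str(NEW_ARRAY_HOME.joinpath(login, subdir, *rest))
    return str(path)  # unrelated paths are returned unchanged


if __name__ == "__main__":
    print(new_path("/storage/brno1-cerit/home/alice/project/data.txt"))
```

Paths that do not point to one of the old arrays are returned unchanged, so the helper can be applied to every path in a script without special cases.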

ATTENTION: Please note that the /storage/brno1-cerit/ disk array also contains data from archives of old, long-deleted disk arrays. We do not have plans to transfer data from archives automatically. If you require data from the following archives, please contact us at meta@cesnet.cz, and we will copy the necessary data to /storage/brno12-cerit/:

- /storage/brno4-cerit-hsm/

- /storage/brno7-cerit/

- /storage/jihlava1-cerit/

Result

The disk array /storage/brno12-cerit/ (storage-brno12-cerit.metacentrum.cz) will be the only one connected to MetaCenter from CERIT-SC.

You will find all your data on the /storage/brno12-cerit/home/LOGIN/... disk array, and the symlinks to the old storage will be removed by summer at the latest.

We apologize for any inconvenience and wish you a pleasant day.

Sincerely, MetaCenter.

Ivana Křenková, Fri Jan 19 15:35:00 CET 2024

Invitation to LUMI Intro Course

Dear users,

we are forwarding an invitation to courses at IT4Innovations.

Best regards,

Ivana Křenková, Mon Jan 15 23:40:00 CET 2024

MetaCentrum & CERIT-SC infrastructure news

1) We contributed to the project that won the AI Awards 2023

Researchers from the Department of Cybernetics at the FAV ZČU, who presented at the MetaCenter Grid Workshop in the spring, and with whom we recently did a report on the use of our services, have won the AI Awards 2023. Congratulations!

Our services, in particular the Kubernetes cluster Kubus and its associated disk storage, are also behind the award-winning project of preserving historical heritage and cultural memory by providing access to the NKVD/KGB archive of historical documents.

MetaCentre manages these computing and data resources to solve very demanding tasks in the field of science and research. For more information, see the ZČU press release.

2) We participate in Czech Space Week

Our colleague Zdeněk Šustr is speaking today at the Copernicus forum and Inspirujme se 2023 conference at the Brno Observatory and Planetarium. He will present new services, data and plans for the Sentinel CollGS national node and the GREAT project. The conference is part of the Czech Space Week event and focuses on remote sensing and INSPIRE infrastructure for spatial data sharing.

The GREAT project is funded by the European Union, Digital Europe Programme (DIGITAL - ID: 101083927).

Ivana Křenková, Thu Nov 30 15:35:00 CET 2023

Invitation to autumn HPC courses

Dear users,

we are forwarding an invitation to courses at IT4Innovations.

With best wishes for a pleasant computing experience,

Ivana Křenková, Wed Jun 14 23:40:00 CEST 2023

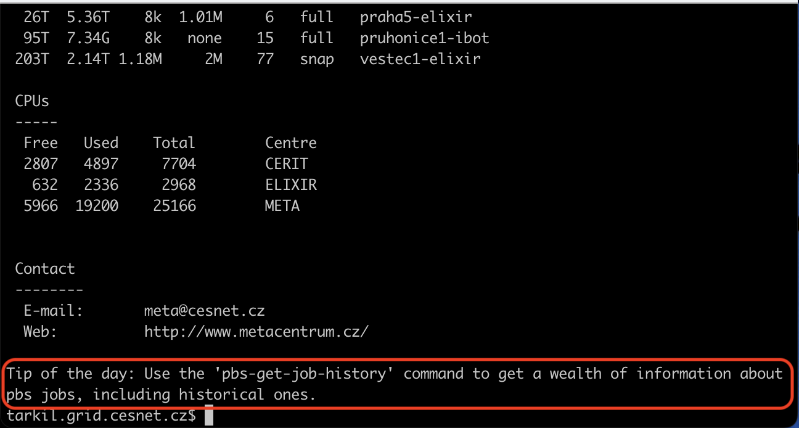

Tips of the day on frontends

Dear users,

Based on the feedback we received from you in the user questionnaire at the turn of the year, we have compiled the most frequent questions into a Tip of the Day.

You will now see a random tip in the form of a short text at the end of the MOTD listing on the frontends when you log in.

You can disable viewing of tips on the selected frontend by using the "touch ~/.hushmotd" command.

With best wishes for a pleasant computing experience,

MetaCentrum

Ivana Křenková, Wed Jun 07 23:40:00 CEST 2023

The most advanced AI system and two new clusters for demanding calculations in MetaCenter

Dear users,

we are pleased to announce that we have acquired some very interesting new HW for MetaCenter.

For more information, please also see the press release e-INFRA CZ "Researchers in the Czech Republic get the most advanced AI system and two new clusters for demanding technical calculations"

1) NVIDIA DGX H100

Masaryk University (CERIT-SC) has become a pioneer in supporting artificial intelligence (AI) and high-performance computing technology with the installation of the latest and most advanced NVIDIA DGX H100 system. This is the first facility of its kind in the entire country (and Europe), bringing extreme computing power and innovative research capabilities.

Featuring the latest NVIDIA Hopper GPU architecture, the DGX H100 features eight advanced NVIDIA H100 Tensor Core GPUs, each with 80GB of GPU memory. This enables parallel processing of huge data volumes and dramatically accelerates computing tasks.

NVIDIA DGX H100 capy.cerit-sc.cz system configuration:

- 8 GPU H100 80GB SXM5

- 135 168 CUDA cores

- 640 GB of GPU memory

- 2 TB RAM memory

- 3.84 TB NVMe for OS

- 30 TB NVMe for data

- Location: Brno (CERIT-SC)

The DGX H100 server comes with the pre-installed NVIDIA DGX software package, which includes a comprehensive set of software tools for deep learning, including pre-configured environments.

The machine is available on-demand in a dedicated queue at gpu_dgx@meta-pbs.metacentrum.cz.

To request access, contact meta@cesnet.cz. In your request, explain why you need this resource (your need and your ability to use it effectively). Please also briefly describe the expected results, the expected volume of resources and the time frame for which access is needed.

2) TURIN and TYRA clusters

In addition, MetaCenter users can start using two brand new computing clusters acquired by CESNET. The first one has been launched at the Institute of Molecular Genetics of the Academy of Sciences of the Czech Republic in Prague under the name TURIN and the second one at the Institute of Computer Science of Masaryk University in Brno under the name TYRA.

The Prague TURIN cluster has 52 nodes, each with 64 CPU cores and 512 GB of RAM. Its Brno counterpart TYRA has 44 nodes with otherwise identical technical specifications.

Both clusters are equipped with AMD processors along with AMD 3D V-Cache technology. These are the most powerful server processors designed for demanding calculations.

Cluster configurations turin.metacentrum.cz and tyra.metacentrum.cz

- 6144 CPU cores in total

- 96 nodes in total, each with

- 64x AMD EPYC 7543@2.80GHz

- RAM: 512 GB

- Disk: 7TiB NVME

- Owner: CESNET

- Location: Prague, Brno

- 10Gb uplink to CESNET backbone network
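The totals in the list above follow directly from the per-cluster node counts. The short Python sketch below only rechecks that arithmetic; the variable and function names are chosen for this illustration.

```python
CORES_PER_NODE = 64  # each node has 64 CPU cores

# Node counts per cluster, as stated in the announcement.
NODES = {"turin": 52, "tyra": 44}


def total_nodes(nodes=NODES):
    """Number of nodes across all listed clusters."""
    return sum(nodes.values())


def total_cores(nodes=NODES, cores_per_node=CORES_PER_NODE):
    """Number of CPU cores across all listed clusters."""
    return total_nodes(nodes) * cores_per_node


if __name__ == "__main__":
    print(total_nodes(), "nodes,", total_cores(), "CPU cores")
```

With 52 + 44 = 96 nodes and 64 cores per node, the result is 96 * 64 = 6144 CPU cores, which matches the figure given in the configuration.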

A complete list of currently available computing servers is available at https://metavo.metacentrum.cz/pbsmon2/hardware.

With best wishes for a pleasant computing experience,

MetaCentrum

Ivana Křenková, Mon Jun 05 23:40:00 CEST 2023

New clusters in MetaCentrum

Dear users,

Masaryk University (CERIT-SC) has become a pioneer in the field of artificial intelligence (AI) and high-performance computing technology by installing the latest and most advanced NVIDIA DGX H100 system. This is the first facility of its kind in the entire country, delivering extreme computing power and innovative research capabilities.

Built on the latest NVIDIA Hopper GPU architecture, the DGX H100 features eight advanced NVIDIA H100 Tensor Core GPUs, each with 80 GB of GPU memory, with a total computing power of 32 TeraFLOPS. This enables parallel processing of huge data volumes and significantly accelerates computing tasks. The high-performance memory subsystems in the graphics accelerators provide fast data access and optimize performance when working with large data sets. Users can achieve unparalleled efficiency and responsiveness in their AI tasks.

The DGX H100 server comes with the pre-installed NVIDIA DGX software package, which includes a comprehensive set of software tools for deep learning, including pre-configured environments.

The machine is available on-demand in a dedicated queue at gpu_dgx@meta-pbs.metacentrum.cz.

To request access, contact meta@cesnet.cz. In your request, explain why you need this resource (your need and your ability to use it effectively). Please also briefly describe the expected results, the expected volume of resources and the time frame for which access is needed.

NVIDIA DGX H100 configuration (capy.cerit-sc.cz)

A complete list of currently available computing servers is at http://metavo.metacentrum.cz/pbsmon2/hardware.

With best wishes for a pleasant computing experience,

Ivana Křenková, Thu Jun 01 23:40:00 CEST 2023

New clusters in MetaCentrum

Dear users,

I am glad to announce that MetaCentrum's computing capacity has been extended with new clusters:

1) CPU cluster turin.metacentrum.cz, 52 nodes, 3328 CPU cores, in each node:

- CPU: 64x AMD EPYC 7543@2.80GHz

- RAM: 512 GiB

- disk: 2x3.84 TiB NVME

- Net: Ethernet 10 Gbit/s

- OS: Debian 11

- performance of each node: SPECrate 2017_fp_base = 516

- owner CESNET, location Prague

2) CPU cluster tyra.metacentrum.cz, 44 nodes, 2816 CPU cores, in each node:

- CPU: 64x AMD EPYC 7543@2.80GHz

- RAM: 512 GiB

- disk: 2x3.84 TiB NVME

- Net: Ethernet 10 Gbit/s

- OS: Debian 11

- performance of each node: SPECrate 2017_fp_base = 516

- owner CESNET, location Brno

Both clusters can be accessed via the conventional job submission through PBS batch system (@pbs-meta server) in short default queues. Longer queues will be added after testing.

For a complete list of available HW in MetaCentrum, see http://metavo.metacentrum.cz/pbsmon2/hardware

MetaCentrum

Ivana Křenková, Fri May 19 23:40:00 CEST 2023

MetaCentrum user documentation is moving

Dear users,

We have prepared new MetaCenter documentation for you, which is available at https://docs.metacentrum.cz/ .

We have structured the content according to the topics you are interested in, which you can find in the top bar. After clicking on the selected topic, the help menu on the left will appear with further navigation. On the right is the table of contents with the topics on the page.

We have included the feedback you sent us in the questionnaire into the documentation (thank you). For example, we cleaned up a lot of outdated information that remained traceable in the wiki and tried to make the tutorial examples clearer.

Because of the ability to trace back information, the original documentation will not be deleted immediately, but will remain temporarily accessible. However, it has not been updated since the end of March 2023!

Why did we choose a different documentation format and leave the wiki?

As you know, we are in the process of integrating our services into a single e-INFRA CZ* platform. Part of this integration is the unification of the format of all user documentation. In the future, we will integrate our new documentation into the common documentation of all services provided as part of e-INFRA CZ activities https://docs.e-infra.cz/.

-----

* e-INFRA CZ is an infrastructure for science and research that connects and coordinates the activities of three Czech e-infrastructures: the CESNET, CERIT-SC and IT4Innovations. More information can be found on the e-INFRA CZ homepage https://www.e-infra.cz/.

-----

The new documentation is still undergoing development and changes. In case you encounter any problems, uncertainties or miss something, please let us know at meta@cesnet.cz . We are already thinking how to make the section of the documentation dedicated to software installations even better for you.

Sincerely,

MetaCenter team

Ivana Křenková, Mon Apr 03 21:39:00 CEST 2023

Open Access Grant Competition of IT4Innovations National Supercomputing Center

Dear users,

we would like to forward information about the grant competition:

Ivana Křenková, Thu Mar 30 21:39:00 CEST 2023

Invitation to the course: Introduction to MPI

Dear users,

let us forward you the following invitation

--

Dear Madam / Sir,

The Czech National Competence Center in HPC is inviting you to a course Introduction to MPI, which will be held hybrid (online and onsite) on 30–31 May 2023.

Message Passing Interface (MPI) is a dominant programming model on clusters and distributed memory architectures. This course is focused on its basic concepts such as exchanging data by point-to-point and collective operations. Attendees will be able to immediately test and understand these constructs in hands-on sessions. After the course, attendees should be able to understand MPI applications and write their own code.

Introduction to MPI

Date: 30–31 May 2023, 9 am to 4 pm

Registration deadline: 23 May 2023

Venue: online via Zoom, onsite at IT4Innovations, Studentská 6231/1B, 708 00 Ostrava–Poruba, Czech Republic

Tutors: Ondřej Meca, Kristian Kadlubiak

Language: English

Web page: https://events.it4i.cz/event/165/

Please do not hesitate to contact us should you have any questions. Please write to us at training@it4i.cz.

We are looking forward to meeting you online and onsite.

Best regards,

Training Team IT4Innovations

training@it4i.cz

Ivana Křenková, Tue Mar 14 21:39:00 CET 2023

Invitation to the Grid Computing Workshop 2023 - MetaCentrum

Dear users,

We would like to invite you to the traditional MetaCenter Seminar for all users, which will take place in Prague on 12th and 13th April 2023.

Together with EOSC CZ, we have prepared a rich program that may be of interest to you.

The first day of the event will be devoted to EOSC CZ activities, especially the preparation of a national repository platform and storage/archiving of research data in the Czech Republic.

The second day will be devoted to the Grid Computing 2023 Workshop, which will be focused on the presentation of the novelties and new services offered by MetaCentre.

These will include Singularity containers, NVIDIA framework for AI, Galaxy, graphical environments in OnDemand and Kubernetes, Jupyter Notebooks, Matlab (invited talk) and many more. In the afternoon, there will be an optional Hands-on workshop with limited capacity, where you can learn a lot of interesting things and try out the topics you are interested in under the guidance of our experts.

As we want the Workshop to meet your needs, we would be very happy if you could let us know which topics you are interested in and what you would like to try. We will try to include them in the program. Please send your suggestions to meta@cesnet.cz.

For more information about the event, please visit the seminar page: https://metavo.metacentrum.cz/cs/seminars/index.html

We look forward to your participation! The seminar will be held in Czech language. We will inform you about the opening of registration.

Yours MetaCentrum

Ivana Křenková, Tue Mar 14 21:39:00 CET 2023

The new way of calculating fairshare

Dear users,

We would like to inform you that starting from Thursday, March 9th, 2023, we are changing the method of calculating fairshare. We are adding a new coefficient called "spec", which takes into account the speed of the computing node on which your job is running.

Until now, "usage fairshare" was calculated as usage = used_walltime*PE , where "PE" represents processor equivalents expressing how many resources (ncpus, mem, scratch, gpu...) the user allocated on the machine.

From now on it will be calculated as usage = spec*used_walltime*PE , where "spec" denotes the standard specification of the main node (spec per CPU) on which the job is running. This coefficient takes values from 3 to 10.
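To make the effect of the new coefficient concrete, the following Python sketch evaluates both formulas for one job. It is only an illustration: the function name fairshare_usage and the sample values (a walltime of 2, a PE of 4, a spec of 5) are made up for this example, and the units of walltime are left unspecified because the announcement does not state them.

```python
def fairshare_usage(used_walltime, pe, spec=None):
    """Compute fairshare usage for one job.

    Without `spec` the old formula is used: usage = used_walltime * PE.
    With `spec` the new formula is used: usage = spec * used_walltime * PE.
    """
    if spec is None:
        return used_walltime * pe
    # The announcement states that spec takes values from 3 to 10.
    if not 3 <= spec <= 10:
        raise ValueError("spec is expected to lie between 3 and 10")
    return spec * used_walltime * pe


if __name__ == "__main__":
    # Illustrative values only.
    print("old:", fairshare_usage(2, 4))
    print("new:", fairshare_usage(2, 4, spec=5))
```

For the same walltime and PE, a job on a node with spec 10 is therefore charged more than three times as much as a job on a node with spec 3.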

We hope that this change will allow you to use our computing resources even more efficiently. If you have any questions, please do not hesitate to contact us.

Ivana Křenková, Tue Mar 07 21:39:00 CET 2023

New version of graphical environment OnDemand

Dear users,

We have prepared a new version of the Open OnDemand graphical environment.

Open OnDemand https://ondemand.metacentrum.cz is a service that enables users to access computational resources via web browser in graphical mode.

Users can start common PBS jobs, access frontend terminals, copy files between our storages or run several graphical applications in the browser. The most used applications include Matlab, ANSYS, MetaCentrum Remote Desktop and VMD (see full list of GUI applications available via OnDemand). The graphical sessions are persistent: you can access them from different computers at different times or even simultaneously.

To log in to the Open OnDemand V2 interface, use your e-INFRA CZ / Metacentrum login and Metacentrum password.

More information can be found in the documentation on the wiki https://wiki.metacentrum.cz/wiki/OnDemand

Ivana Křenková, Mon Feb 13 21:39:00 CET 2023

Invitation to the course: High Performance Data Analysis with R

Dear users,

let us forward you the following invitation

--

Dear Madam / Sir,

The Czech National Competence Center in HPC is inviting you to a course High Performance Data Analysis with R, which will be held hybrid (online and onsite) on 26–27 April 2023.

This course is focused on data analysis and modeling in R statistical programming language. The first day of the course will introduce how to approach a new dataset to understand the data and its features better. Modeling based on the modern set of packages jointly called TidyModels will be shown afterward. This set of packages strives to make the modeling in R as simple and as reproducible as possible.

The second day is focused on increasing computation efficiency by introducing Rcpp for seamless integration of C++ code into R code. A simple example of CUDA usage with Rcpp will be shown. In the afternoon, the section on parallelization of the code with future and/or MPI will be presented.

High Performance Data Analysis with R

Date: 26–27 April 2023, 9 am to 5 pm

Registration deadline: 20 April 2023

Venue: online via Zoom, onsite at IT4Innovations, Studentská 6231/1B, 708 00 Ostrava – Poruba, Czech Republic

Tutor: Tomáš Martinovič

Language: English

Web page: https://events.it4i.cz/event/163/

Please do not hesitate to contact us should you have any questions. You can write to us at training@it4i.cz.

We are looking forward to meeting you online and onsite.

Best regards,

Training Team NCC Czech Republic

training@it4i.cz

Ivana Křenková, Tue Jan 31 21:39:00 CET 2023

Providing feedback on MetaCentrum services

Dear users,

We would like to hear what you think about the services we are providing.

Please take about 15 minutes to complete the feedback form. Your answers give us valuable information that we need to improve our services.

We understand that the time you spend on this questionnaire is valuable. Therefore, everybody who completes the form and fills in their e-INFRA CZ login will receive a reward from us equivalent to 0.5 of an impacted publication in the Grid service.

Feedback form (please choose any language option):

Thank you for your feedback. We wish you every success and all the best in 2023.

Your MetaCentrum

Ivana Křenková, Tue Jan 10 10:40:00 CET 2023

New queue uv18.cerit-pbs.cerit-sc.cz on ursa node

Dear users,

To make better use of the NUMA architecture of the ursa server, we have introduced the uv18.cerit-pbs.cerit-sc.cz queue. It allocates processors only in groups of 18, so that entire NUMA nodes are always used and a computation is not significantly slowed down by being spread unnecessarily across multiple NUMA nodes.

The queue therefore accepts jobs in multiples of 18 CPU cores and has a high priority.
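The multiple-of-18 rule can be sketched as a small helper that rounds a desired core count up to the next size the queue accepts. This is only an illustrative sketch: the function name is hypothetical, and the only fact taken from the announcement is the allocation unit of 18 cores.

```python
# Allocation unit of the uv18 queue, as stated in the announcement.
CORES_PER_NUMA_NODE = 18


def round_up_to_uv18(ncpus: int) -> int:
    """Return the smallest multiple of 18 that is >= ncpus."""
    if ncpus < 1:
        raise ValueError("ncpus must be a positive integer")
    # Ceiling division using integers only, then scale back up.
    numa_nodes = -(-ncpus // CORES_PER_NUMA_NODE)
    return numa_nodes * CORES_PER_NUMA_NODE


if __name__ == "__main__":
    for wanted in (1, 18, 19, 40):
        print(wanted, "->", round_up_to_uv18(wanted))
```

A job that needs 40 cores would, under this rule, be requested with 54 cores, i.e. three whole NUMA nodes.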

Best regards,

Your MetaCentrum

Ivana Křenková, Tue Nov 29 10:40:00 CET 2022

New parameter in PBS: spec

Dear users,

when submitting a computational job, it is now possible to define the minimum CPU speed of the computing node, i.e. to make sure that the node the job runs on has a CPU of the defined speed or faster. A new PBS parameter, spec, is used for this purpose. Its numerical value is obtained using the methodology of https://www.spec.org/. To learn more about using the spec parameter, visit our wiki at https://wiki.metacentrum.cz/wiki/About_scheduling_system#CPU_speed.

Setting a requirement on CPU speed can make the job run faster, but it also limits the number of machines the job has at its disposal, which can result in longer queuing times. Please bear this in mind when using the spec parameter.
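The trade-off described above can be illustrated with a short sketch. It assumes that spec is given as one more resource in the select chunk; the exact syntax and valid values should be checked on the wiki page linked above. The node names and spec values below are made up for illustration and do not describe real MetaCentrum machines.

```python
def select_with_min_spec(ncpus: int, mem: str, min_spec: float) -> str:
    """Build a select chunk asking for a minimum CPU speed.

    Assumption: spec is written like any other chunk resource.
    """
    return f"select=1:ncpus={ncpus}:mem={mem}:spec={min_spec}"


def eligible_nodes(node_specs: dict[str, float], min_spec: float) -> list[str]:
    """Return the names of nodes whose spec value meets the requirement."""
    return sorted(name for name, spec in node_specs.items() if spec >= min_spec)


# Hypothetical nodes with invented spec values, for illustration only.
HYPOTHETICAL_NODES = {"node-a": 3.9, "node-b": 4.8, "node-c": 5.6}

if __name__ == "__main__":
    print(select_with_min_spec(4, "8gb", 4.8))
    # The higher the threshold, the fewer machines remain available.
    for threshold in (0.0, 4.8, 5.5):
        print(threshold, eligible_nodes(HYPOTHETICAL_NODES, threshold))
```

Raising the threshold shrinks the list of eligible nodes, which is why a strict spec requirement can lengthen the time a job waits in the queue.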

Best regards,

Your MetaCentrum

Ivana Křenková, Mon Aug 29 10:40:00 CEST 2022

Results of the weak user password audit

Dear Madam/Sir,

As part of the MetaCentrum infrastructure security audit, we identified several weak user passwords. To ensure sufficient protection of the MetaCentrum environment, the affected users will need to change their password on the MetaCentrum portal (https://metavo.metacentrum.cz/cs/myaccount/heslo.html).

The affected users will be contacted directly.

Should you have any questions, please contact support@metacentrum.cz

Yours,

MetaCentrum

Ivana Křenková, Fri Aug 12 21:40:00 CEST 2022

Operational news of the MetaCentrum & CERIT-SC infrastructures

We would like to inform users about several new features in the MetaCentrum & CERIT-SC infrastructures:

1) Browser access to GUI applications

Users can now access GUI applications simply through a web browser. For detailed information see https://wiki.metacentrum.cz/wiki/Remote_desktop#Quick_start_I_-_Run_GUI_desktop_in_a_web_browser.

Access through a VNC client (an older and more complicated way to get a GUI) remains unchanged - see https://wiki.metacentrum.cz/wiki/Remote_desktop#Quick_start_II_-_Run_GUI_desktop_in_a_VNC_session and the following tutorials.

2) History of finished jobs

Users can now fetch data from finished jobs, including those that finished more than 24 hours ago. To do so, use the command

pbs-get-job-history <job_id>

If the job is found in the archive, the command creates a new subdirectory in the current directory, named after the job ID (e.g. 11808203.meta-pbs.metacentrum.cz), containing several files:

job_ID.SC - a copy of the batch script as passed to qsub

job_ID.ER - standard error output (STDERR) of the job

job_ID.OU - standard output (STDOUT) of the job

For detailed information see https://wiki.metacentrum.cz/wiki/PBS_get_job_history
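Once the command has created the subdirectory, the retrieved files can be located programmatically. The following is a minimal sketch that assumes only the directory layout described above (a subdirectory named after the job ID, holding files with the suffixes .SC, .ER and .OU); the function name is hypothetical and not part of any MetaCentrum tool.

```python
from pathlib import Path

# File suffixes listed in the announcement.
JOB_FILE_SUFFIXES = ("SC", "ER", "OU")


def existing_job_files(job_id: str, base_dir: str = ".") -> dict[str, Path]:
    """Map each suffix to its file path, keeping only files that exist."""
    job_dir = Path(base_dir) / job_id
    found = {}
    for suffix in JOB_FILE_SUFFIXES:
        # The job ID itself contains dots, so append the suffix explicitly.
        candidate = job_dir / f"{job_id}.{suffix}"
        if candidate.is_file():
            found[suffix] = candidate
    return found


if __name__ == "__main__":
    job_id = "11808203.meta-pbs.metacentrum.cz"  # example ID from the text
    for suffix, path in existing_job_files(job_id).items():
        print(suffix, path, path.stat().st_size, "bytes")
```

If the job was not found in the archive and no subdirectory was created, the function simply returns an empty dictionary.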

3) Setting up minimal required memory on GPU card

Users can now specify the minimum amount of memory that the GPU card must have, using the new PBS parameter gpu_mem. For example, the command

qsub -q gpu -l select=1:ncpus=2:ngpus=1:mem=10gb:scratch_local=10gb:gpu_mem=10gb -l walltime=24:0:0

makes sure that the GPU card on the computational node has at least 10 GB of memory.

For more information see https://wiki.metacentrum.cz/wiki/GPU_clusters.

We would also like to note that it is better to select a GPU machine by specifying the gpu_mem and cuda_cap parameters than by naming a particular cluster. The former approach covers a wider set of machines and therefore shortens the queuing time of jobs.
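As a rough sketch, a GPU submission like the one above can be assembled from its individual resource requests. The function and its parameter names are hypothetical. The cuda_cap argument is passed through unchanged because its value format is not shown in this announcement; see the GPU clusters wiki page for valid values.

```python
from typing import Optional


def gpu_qsub_args(ncpus: int, ngpus: int, mem: str, scratch_local: str,
                  gpu_mem: str, walltime: str, queue: str = "gpu",
                  cuda_cap: Optional[str] = None) -> list[str]:
    """Build the qsub argument list for a single-chunk GPU job."""
    resources = [
        "select=1",
        f"ncpus={ncpus}",
        f"ngpus={ngpus}",
        f"mem={mem}",
        f"scratch_local={scratch_local}",
        f"gpu_mem={gpu_mem}",
    ]
    if cuda_cap is not None:
        # Value format is an assumption left to the caller.
        resources.append(f"cuda_cap={cuda_cap}")
    return ["qsub", "-q", queue, "-l", ":".join(resources),
            "-l", f"walltime={walltime}"]


if __name__ == "__main__":
    args = gpu_qsub_args(ncpus=2, ngpus=1, mem="10gb", scratch_local="10gb",
                         gpu_mem="10gb", walltime="24:0:0")
    print(" ".join(args))
```

With the values shown, the printed command matches the example in the text. Note that no cluster name appears anywhere in the request, so the scheduler is free to pick any machine that satisfies the resource constraints.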

Ivana Křenková, Thu Aug 11 15:35:00 CEST 2022

ESFRI Open Session Invitation

Dear Madam/Sir,

We are forwarding the invitation to the ESFRI Open Session.

--

Dear All,

I am pleased to invite you to the 3rd ESFRI Open Session, with the leading theme Research Infrastructures and Big Data. The event will take place on June 30th 2022, from 13:00 until 14:30 CEST and will be fully virtual. The event will feature a short presentation from the Chair on recent ESFRI activities, followed by presentations from 6 Research infrastructures on the theme and there will also be an opportunity for discussion. The detailed agenda of the 3rd Open Session will soon be available via the event webpage.

ESFRI holds Open Sessions at its plenary meetings twice a year to communicate its activities to a wider audience. They are intended to serve both the ESFRI Delegates and representatives of the Research Infrastructures community, and to facilitate two-way exchange. ESFRI launched the Open Session initiative as part of the goals set within the ESFRI White Paper - Making Science Happen.

I would like to inform you that the Open Session will be recorded and made available on our ESFRI YouTube channel. The recordings from the previous Open Sessions, themed around the ESFRI RIs' response to the COVID-19 pandemic and the European Green Deal, are available here.

Please forward this invitation to your colleagues in the EU Research & Innovation ecosystem that you deem would benefit from the event.

Registration is mandatory for participation, and should be done via the following link:

https://us06web.zoom.us/webinar/register/WN_0-sM43ktT3mPuCzXi3KNdQ

Your attendance at the Open Session will be highly appreciated.

Sincerely,

Jana Kolar,

ESFRI Chair

Ivana Křenková, Mon Jun 20 21:40:00 CEST 2022

Invitation to the MetaCentrum Grid Computing Seminar 2022

Dear users,

We would like to invite you to attend the MetaCentrum Grid Computing Seminar 2022, which will take place on 10 May 2022 in Prague at the Diplomat Hotel.

The seminar is part of the e-Infrastructure Conference e-INFRA CZ 2022 https://www.e-infra.cz/konference-e-infra-cz and will be held in the Czech language.

We would like to introduce you to the e-INFRA CZ infrastructure, its services, international projects and research activities. We will also present the latest news and outline our plans.

The afternoon programme offers two parallel sessions. One will focus on network development, security and multimedia, and the other on data processing and storage (the MetaCentrum Grid Computing Seminar 2022).

In the evening, those interested can attend a bonus session, Grid Service MetaCentrum - Best Practices, followed by an open discussion on the topics that interest or trouble you.

For more information, agenda and registration, visit the event page at https://metavo.metacentrum.cz/cs/seminars/seminar2022/index.html

We look forward to seeing you,

Your MetaCentrum

Ivana Křenková, Mon Apr 18 21:40:00 CEST 2022

New clusters in MetaCentrum

Dear users,

I am glad to announce that MetaCentrum's computing capacity has been extended with new clusters:

1) GPU cluster

galdor.metacentrum.cz (owner CESNET), 20 nodes, 1280 CPU cores and 80x GPU NVIDIA A40, in each node:

- CPU: 64x AMD EPYC 7543

- RAM: 512 GiB

- GPU: 4x NVIDIA A40

- disk: 2x7.68 TiB NVME

- Net: Ethernet 10 Gbit/s

- OS: Debian 11

- performance of each node: SPECfp2017: 513 (8 per core)

The cluster can be accessed via conventional job submission through the PBS batch system (@pbs-meta server) in the gpu priority queue and in short default queues.

On GPU clusters, it is possible to use Docker images from NVIDIA GPU Cloud (NGC), the most widely used environment for developing machine learning and deep learning applications, HPC applications, or visualization accelerated by NVIDIA GPU cards. Deploying these applications is then a matter of copying the link to the appropriate Docker image and running it as a container in Singularity. More information can be found at https://wiki.metacentrum.cz/wiki/NVidia_deep_learning_frameworks

2) CPU cluster

halmir.metacentrum.cz (owner CESNET), 31 nodes, 1984 CPU cores, in each node:

- CPU: 64x AMD EPYC 7543

- RAM: 1024 GiB

- disk: 2x7.68 TiB NVME

- Net: Ethernet 10 Gbit/s

- OS: Debian 11

- performance of each node: SPECfp2017: 513 (8 per core)

The cluster can be accessed via conventional job submission through the PBS batch system (@pbs-meta server) in short default queues. Longer queues will be added after testing.

We are continuously resolving compatibility problems between some applications and the Debian 11 OS by recompiling SW modules. If you encounter a problem with your application, try adding the debian10-compat module at the beginning of the startup script. If the problems persist, let us know at meta (at) cesnet.cz.

For a complete list of available HW in MetaCentrum see http://metavo.metacentrum.cz/pbsmon2/hardware

MetaCentrum

Ivana Křenková, Fri Mar 11 23:40:00 CET 2022

Kubernetes webinar invitation

Dear users,

we invite you to the webinar Introduction to Kubernetes as another computing platform available to MetaCentrum users.

What you will learn

- Introduction to Kubernetes. [PDF]

- Web with examples (in Czech) https://docs.cerit.io/docs/webinar1.html

- Web applications launched from Kubernetes - Jupyter and Binder Hub.

- Available applications for interactive work - Ansys, Matlab, RStudio.

- We will demonstrate some of them in a practical example.

- Example of running a custom application.

The technical requirements

- A standard browser is sufficient for web applications.

- To try Ansys / Matlab you need a VNC viewer (RealVNC, TurboVNC); macOS users need only the Safari browser.